The latest Tesla FSD update allows drivers to text in certain contexts, raising concerns from regulators and safety experts regarding distracted driving laws.

Tesla has once again positioned itself at the center of the automotive safety discourse following confirmation from Chief Executive Elon Musk that the company’s latest software iteration, FSD version 14.2.1, may permit drivers to engage in text messaging while the vehicle is in motion. This development marks a pivotal shift in how the electric vehicle manufacturer manages driver attention, moving away from strict, continuous alerts toward a system that adapts to the driving environment.

Musk acknowledged this operational change after a user observed that the vehicle no longer issued standard warnings when they utilized their mobile device while Full Self-Driving (FSD) was engaged. According to Musk, the suppression of these alerts is not a glitch but a feature predicated on “the context of surrounding traffic.” While the CEO did not provide a granular technical breakdown of how the software’s algorithms quantify safety to determine when texting is permissible, the implication is clear: Tesla is confident that its system can interpret road conditions well enough to allow the human operator a degree of disengagement previously deemed unsafe.

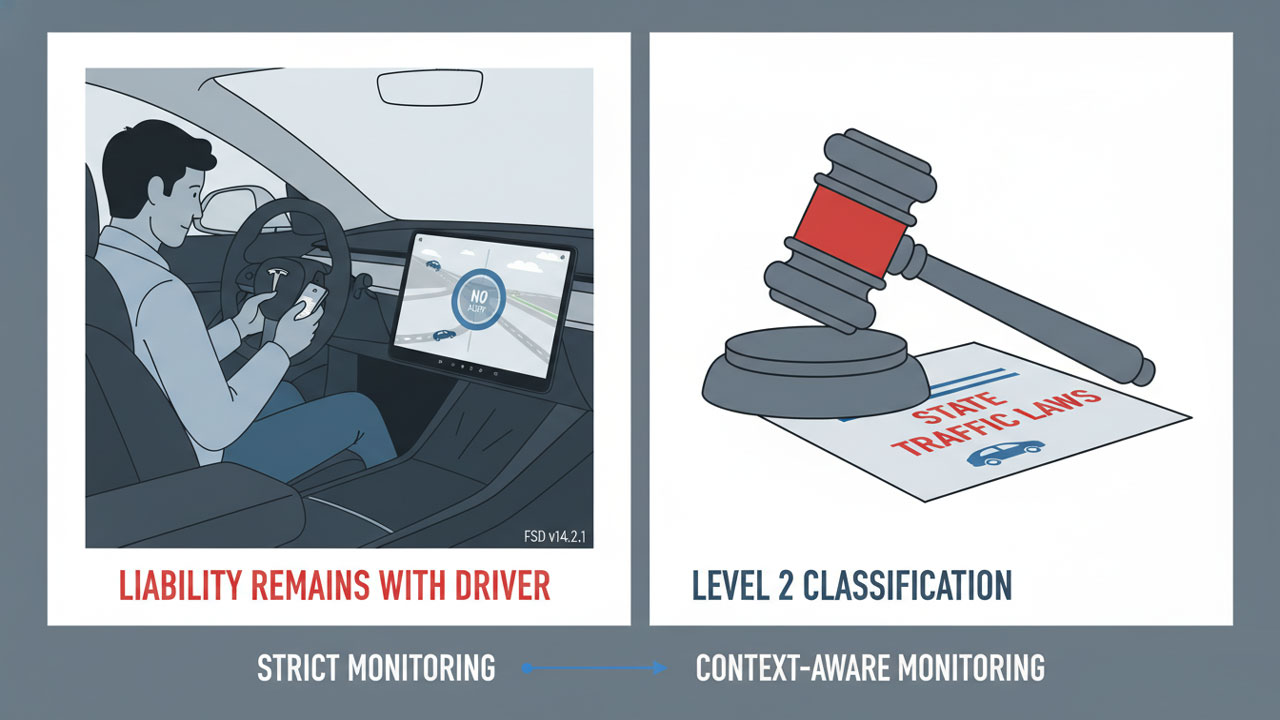

This adjustment is significant not merely as a software patch, but as a philosophical divergence from standard industry practices regarding distracted driving management. FSD remains officially categorized as a driver-assistance system, specifically Level 2 under SAE International standards. This classification unequivocally mandates active, continuous human supervision. By introducing a “context-aware” allowance for phone use, Tesla is effectively redefining the operational boundaries of a Level 2 system, a move that places the company at odds with established safety protocols and regulatory expectations.

The Intersection of Technology and Public Safety

The timing of this update is critical. Tesla continues to aggressively promote a long-term vision of autonomous mobility, suggesting that a future of hands-free, eyes-off driving is imminent. By enabling texting in specific scenarios, the company appears to be signaling a renewed, robust confidence in the system’s ability to navigate complex driving tasks with increased independence. However, this technological confidence is raising profound concerns among safety experts and policy analysts who monitor road safety statistics.

Distracted driving remains one of the leading causes of road fatalities in the United States. For decades, safety advocacy groups and state legislatures have worked to stigmatize and outlaw the use of handheld devices behind the wheel. Experts argue that allowing phone use, even selectively, risks validating a behavior that public policy has spent years attempting to eliminate. The concern is that if a vehicle explicitly permits a driver to text, it creates a psychological permission structure that may bleed into situations where the software is not capable of handling the risk.

Analyzing the Regulatory Friction

The Tesla FSD update does not exist in a vacuum; it has been released into a highly charged regulatory atmosphere. The rollout immediately intensified the scrutiny from federal regulators who are already closely monitoring Tesla’s deployment of automated systems. The National Highway Traffic Safety Administration (NHTSA) currently maintains an active investigation into FSD. This federal inquiry was precipitated by reports of vehicles allegedly behaving erratically—specifically running red lights, drifting into opposing lanes, and struggling with visibility challenges during nighttime or low-light conditions.

These investigations are indicative of a broader, systemic trend wherein safety authorities are increasingly questioning the efficacy and safety of “beta-testing” software on public roads. The central question for regulators is whether Tesla’s iterative approach exposes drivers, pedestrians, and other road users to unnecessary and potentially avoidable risks. The introduction of a feature that relaxes restrictions on phone use adds a new, complex layer to this ongoing debate. It forces regulators to ask whether software that technically permits illegal behavior (texting while driving) can coexist with federal safety mandates.

Tesla FSD update

| Feature/Issue | Status Prior to Update | Status in FSD v14.2.1 | Regulatory/Legal Context |
|---|---|---|---|
| Phone Use Policy | Strictly monitored; alerts triggered by phone usage. | Context-dependent; alerts suppressed in certain traffic conditions. | Illegal in most states; violates “hands-free” laws. |
| System Classification | Level 2 (Driver Assistance). | Level 2 (Driver Assistance). | Requires constant human supervision. |
| Driver Monitoring | High sensitivity to eye/head movement. | Variable sensitivity based on environment. | Monitoring is standard for Level 2 systems. |
| Liability | Driver is 100% liable. | Driver is 100% liable. | Manufacturers generally assume liability only at Level 3+. |
| Regulatory Status | Under NHTSA Investigation. | Scrutiny Intensified. | Subject to potential recalls or software patches. |

Legal Tensions and the Liability Paradox

Musk’s announcement exposes a sharp tension between technological innovation and existing legal frameworks. Across the United States, almost every state enforces bans on texting while driving, and many jurisdictions prohibit any form of handheld phone use regardless of the reason. The law is unequivocal: it identifies the person sitting in the driver’s seat as the responsible operator of the vehicle.

This creates a precarious legal paradox for Tesla owners. Even if the Tesla FSD update deems the environment safe enough for the driver to look down at a phone, state troopers and local police apply a different standard: the traffic code. A driver using this feature risks citation, fines, and points on their license. Furthermore, in the event of a collision, the driver remains fully liable, both civilly and criminally. The software’s permission to text offers no legal immunity.

Tesla has repeatedly stated in disclaimers and manuals that FSD is not autonomous. However, critics and legal experts argue that the company’s messaging—and feature sets like this one—often blur the distinction for the average consumer. By technically enabling a behavior that is legally prohibited, the software sends a mixed message that may complicate liability claims and insurance disputes.

State-Level Pressures: The California Case

Beyond federal oversight, Tesla faces significant pressure at the state level, particularly from the California Department of Motor Vehicles (DMV). The agency has been pursuing legal action against the automaker since 2022. The core of the DMV’s argument is that Tesla has misrepresented the capabilities of both its Autopilot and FSD systems in its marketing materials. The agency alleges that the branding itself—using terms like “Full Self-Driving”—could mislead buyers into believing the vehicles are fully autonomous when they are not.

The stakes in California are high. Regulators in the state are considering penalties that could, in theory, temporarily suspend Tesla’s license to manufacture or sell vehicles in California for at least 30 days. Such a decision would have massive financial and reputational implications. A ruling on this case is expected soon, and the introduction of the new texting-while-driving capability may influence those deliberations. It raises further questions about how Tesla communicates safety expectations to its customers and whether the vehicle’s design encourages over-reliance on the technology.

Diverging Industry Philosophies

These developments are occurring amidst a broader industry-wide conversation regarding the regulation of Advanced Driver-Assistance Systems (ADAS). Legacy automakers generally adopt a conservative approach to Level 2 automation. Systems such as GM’s Super Cruise or Ford’s BlueCruise often use infrared cameras to ensure the driver’s eyes remain on the road, even when hands are off the wheel. If the driver looks away or picks up a phone, these systems typically disengage or issue escalating warnings.

Tesla’s approach—relying on software-based monitoring that uses cabin cameras to track head position and eye movement—has been criticized by some safety researchers. They argue that humans are fundamentally poor supervisors of automated systems. The “vigilance decrement,” a psychological phenomenon where human attention wanes during passive monitoring, makes it difficult for drivers to intervene suddenly when a system fails. Critics contend that by reducing alerts, Tesla is exacerbating this psychological vulnerability, encouraging drivers to check out mentally when the system appears to be functioning smoothly.

Inside the Real-World Test

To understand how the Tesla FSD update functions outside of corporate press releases, a reporter conducted a real-world test using a 2024 Tesla Model 3 equipped with FSD version 14.2.1. The test took place over a roughly seven-minute drive through Silicon Valley, a region known for its mix of suburban avenues and tech-centric traffic patterns.

During this drive, the reporter was able to continuously use their phone, type messages, and interact with the device without receiving the typical, piercing alerts that previously penalized distracted behavior. The vehicle navigated a variety of standard obstacles: residential streets, parked cars along the curb, oncoming vehicles, and tight navigation spaces. Throughout the seven-minute interval, the car managed these tasks with no major issues, completing the route smoothly.

However, the system was not entirely passive. The car still issued periodic reminders, such as requests for steering-wheel pressure—often referred to as the “nag.” It also provided occasional warnings designed to ensure the driver remained somewhat engaged. These alerts demonstrate that although Tesla has loosened certain restrictions regarding phone use, the system retains a baseline level of driver-monitoring enforcement. It is not a complete abdication of supervision, but rather a recalibration of the threshold for intervention.

The Risk of Complacency

The test highlighted a deeper, more insidious issue regarding human-machine interaction: complacency. The car performed competently in low-to-moderate complexity environments even when the driver’s attention was divided. This success is, paradoxically, a safety risk. If a driver feels overly confident because the car handles a quiet suburban street well while they text, they may maintain that behavior as they enter a construction zone or a high-speed highway merge—situations where split-second human reaction is vital.

Researchers have noted that while camera-based monitoring can be effective, it is not foolproof. When software updates reduce the frequency or intrusiveness of alerts, they condition the user to trust the machine implicitly. Reducing phone-use warnings could lead some drivers to believe that the car is fully capable of handling emergency maneuvers independent of human input. Experts warn that this perception can lead to delayed human intervention during critical moments, the exact milliseconds that determine whether a near-miss becomes a collision.

It is important to contextualize the reporter’s test. Although the brief drive showed no performance failures, it did not represent the full spectrum of driving challenges. It did not cover highway speeds, complex multi-lane intersections, or unpredictable conditions such as heavy rain or active construction zones. Regulators often stress that a technology functioning correctly during short, controlled intervals does not guarantee safety over longer, more varied driving experiences. This distinction is crucial for systems like FSD, which are still evolving and depend heavily on continuous real-world data collection to improve their neural networks.

A Pivotal Moment for Autonomous Ambitions

Tesla’s decision to soften rules around texting while driving is more than a minor software tweak; it acts as a bellwether for the future of the automotive industry. It signals the company’s growing push toward a future where drivers may rely more heavily on automated systems and where the boundary between driver and passenger becomes increasingly ambiguous.

For supporters of the brand and the technology, the update serves as a showcase of Tesla’s progress. It suggests that FSD’s ability to “understand” complex traffic environments has reached a level where it can tolerate brief lapses in human attention. For critics, however, it illustrates the inherent risks of expanding driver freedom before the technology is statistically proven to be as safe as, or safer than, a vigilant human driver.

The change forces a difficult public conversation about responsibility and risk. Even if Tesla believes FSD can safely manage certain tasks while the driver is distracted, the legal system currently does not recognize any vehicle on U.S. roads as fully autonomous. Until laws evolve to accommodate Level 3, 4, and 5 autonomy, drivers remain accountable for their actions. This means that texting while FSD is engaged could still result in severe legal consequences.

The broader implications of this update extend far beyond Tesla’s customer base. This decision could influence how regulators think about partial automation, how insurers evaluate liability and premiums, and how the general public perceives automated driving technology. If Tesla continues to roll out features that assume higher system reliability, lawmakers may feel pressure to update traffic codes to specifically address semi-autonomous operation. Conversely, safety organizations may amplify warnings against complacency, pushing for stricter enforcement of existing laws.

Ultimately, this update underscores the persistent tension between rapid technological innovation and the public-safety frameworks that evolve far more slowly. As the company pushes further into the realm of autonomy, society will have to reckon with the trade-offs involved. Determining how much freedom drivers should have while machines take partial control is a complex equation involving engineering, law, and human psychology. The new texting capability in FSD v14.2.1 is likely only the beginning of a much larger, multi-year debate about the future of human-machine responsibility on the road.